Paper Review: Personalized Audiobook Recommendations at Spotify Through Graph Neural Networks

Spotify recently expanded its catalog to include audiobooks. Personalized recommendation for them is challenging because users cannot skim an audiobook before purchase, data is scarce for a newly introduced content type, and the model must be fast and scalable. To overcome these obstacles, Spotify developed a novel recommendation system, 2T-HGNN, which combines a Heterogeneous Graph Neural Network (HGNN) with a Two Tower (2T) model.

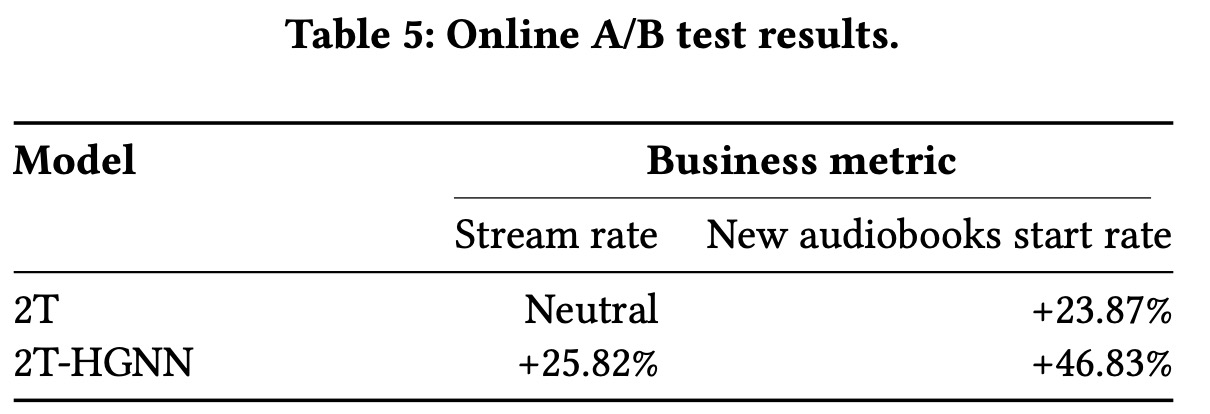

By decoupling users from the HGNN graph and using a multi-link neighbor sampler, the complexity of the HGNN model is significantly reduced, ensuring low latency and scalability. Empirical evaluations with millions of users demonstrated a substantial improvement in personalized recommendations, resulting in a 46% increase in the rate of starting new audiobooks and a 23% increase in streaming rates. The model also improved podcast recommendations, indicating that it applies beyond audiobooks.

Data

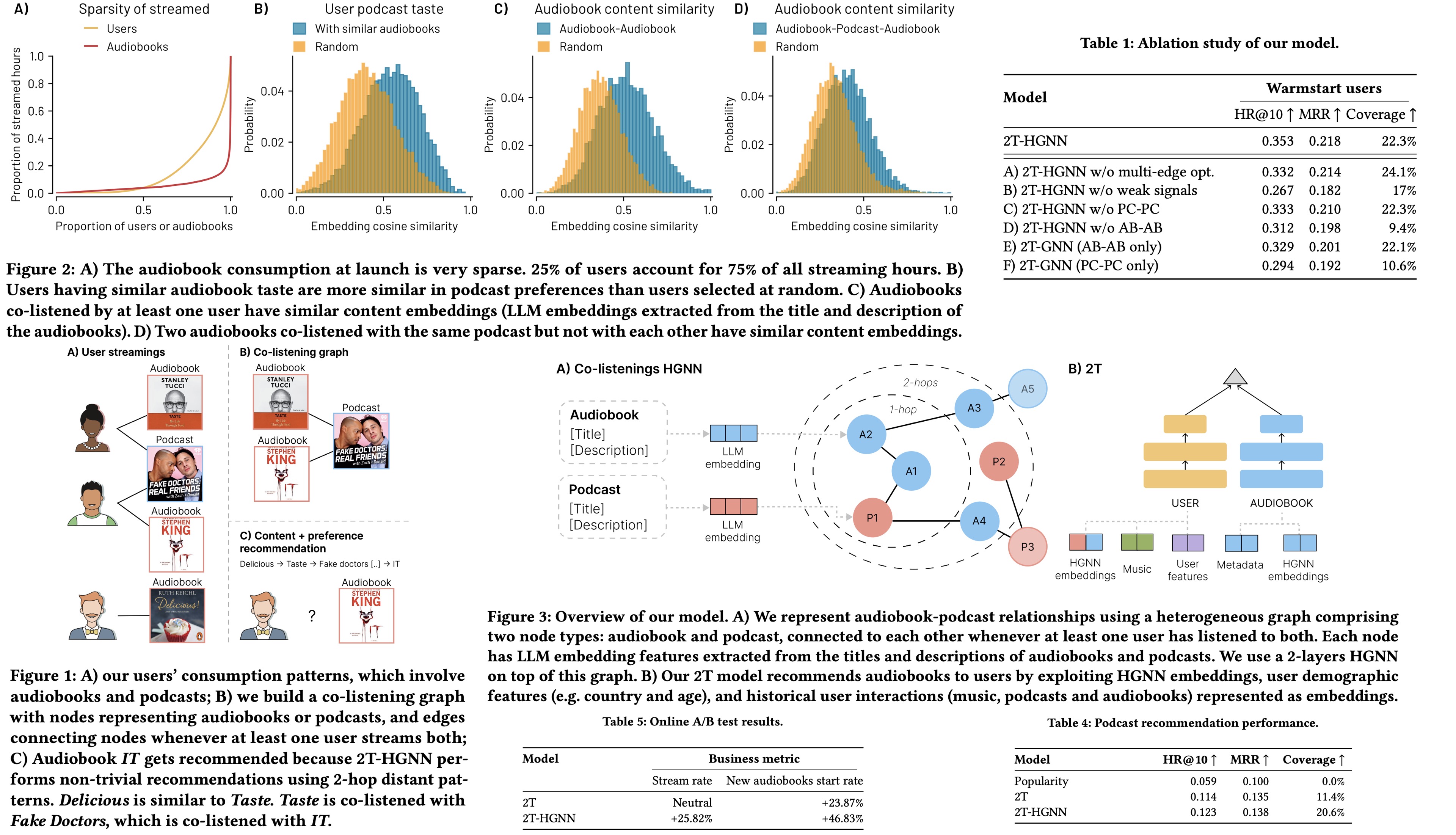

- Audiobook streams are mostly dominated by power users and popular titles: 25% of users account for 75% of all audiobook streaming hours, and the top 20% of audiobooks represent over 80% of streamed hours;

- Podcast user tastes and content information are informative for inferring users’ audiobook consumption patterns: most audiobook consumers had previously interacted with podcasts. Users with shared audiobook listening experiences exhibit significantly higher similarity than random pairs. Metadata analysis showed that pairs of audiobooks that at least one user listened to are more similar to each other than random pairs;

- Accounting for podcast interactions with audiobooks is essential for better understanding user preferences: an analysis of a graph with both audiobooks and podcasts showed that audiobooks connected through shared podcasts have a stronger similarity;

- Incorporating weak signals into the model can help predict future streams and uncover subtle user preferences and intents: analysis of over 198 million interactions showed that certain “weak signals” (such as following an audiobook or showing intent to pay) can significantly predict future audiobook streams. The “follow” action, in particular, was a strong indicator of future stream initiation.

Model

Heterogeneous Graph Neural Network

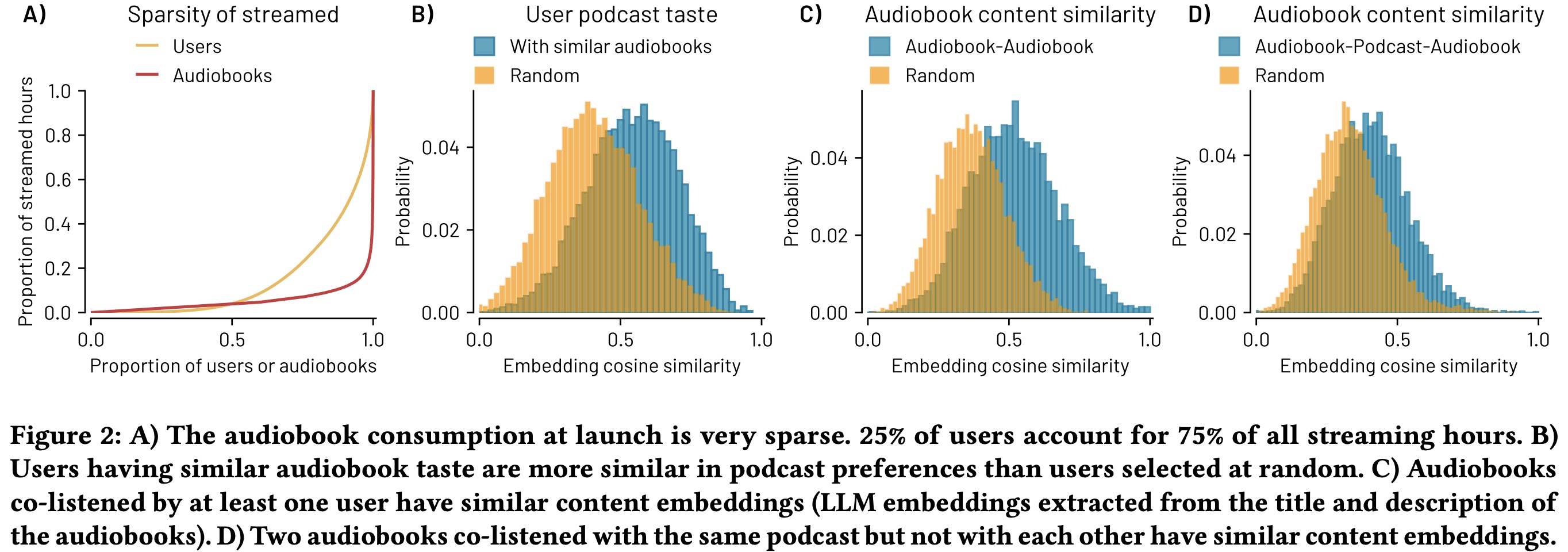

The graph connects audiobooks and podcasts as nodes based on user interactions. Node features are augmented by embeddings from titles and descriptions via multi-language Sentence-BERT, facilitating the HGNN’s learning of complex patterns from both content and user preferences.

The model iteratively updates node features by first aggregating neighbor features based on their relationships, then combining these with the node’s original features across several layers. It normalizes node embeddings for training stability and search efficiency and extends the GraphSAGE framework to handle heterogeneous graphs. HGNN uses a contrastive loss function during training to enhance the similarity of connected node embeddings while distancing those of unconnected nodes, optimizing the network to produce meaningful embeddings reflective of the graph’s structure.
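The update step described above can be sketched in a few lines. This is a simplified illustration, not the paper's implementation: the function name `hetero_sage_layer` is hypothetical, and a scalar weight per edge type stands in for the learned weight matrices and nonlinearity of a real GraphSAGE-style layer. It shows the three pieces the text names: per-relationship neighbor aggregation, combination with the node's own features, and L2 normalization.

```python
import math

def l2_normalize(vec):
    """Scale a vector to unit length (left unchanged if it is all zeros)."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec] if norm > 0 else list(vec)

def mean_vectors(vectors, dim):
    """Element-wise mean; a zero vector when there are no neighbors."""
    if not vectors:
        return [0.0] * dim
    return [sum(v[i] for v in vectors) / len(vectors) for i in range(dim)]

def hetero_sage_layer(features, neighbors_by_type, type_weights, self_weight=1.0):
    """One simplified message-passing step over a heterogeneous graph.

    features:          {node: [float, ...]}
    neighbors_by_type: {edge_type: {node: [neighbor, ...]}}
    type_weights:      {edge_type: float}  (assumption: scalar stand-ins
                       for the learned per-relation weight matrices)
    """
    dim = len(next(iter(features.values())))
    updated = {}
    for node, own in features.items():
        combined = [self_weight * x for x in own]
        for edge_type, adjacency in neighbors_by_type.items():
            neighbor_feats = [features[n] for n in adjacency.get(node, [])]
            aggregated = mean_vectors(neighbor_feats, dim)
            weight = type_weights[edge_type]
            combined = [c + weight * a for c, a in zip(combined, aggregated)]
        updated[node] = l2_normalize(combined)
    return updated

# Toy graph: two audiobooks and one podcast.
features = {"book_a": [1.0, 0.0], "book_b": [0.0, 1.0], "pod_x": [1.0, 1.0]}
neighbors = {
    "book-book": {"book_a": ["book_b"], "book_b": ["book_a"]},
    "book-pod": {"book_a": ["pod_x"], "pod_x": ["book_a"]},
}
weights = {"book-book": 0.5, "book-pod": 0.5}
h1 = hetero_sage_layer(features, neighbors, weights)
```

Stacking two such calls (feeding `h1` back in as `features`) mirrors a two-layer network in which each node sees its two-hop neighborhood.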

To counteract the imbalance in the co-listening graph, which has more podcast-podcast and audiobook-podcast edges than audiobook-audiobook connections, a multi-link neighborhood sampler was developed. By undersampling the majority edge types and selecting equal numbers of audiobook-audiobook and audiobook-podcast connections, it ensures diverse and comprehensive training data coverage across epochs.
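A minimal sketch of the balancing idea follows. It assumes the simplest possible policy, undersampling every edge type down to the size of the rarest one; the exact sampling ratios used in the paper are not given here, and `sample_balanced_edges` is a hypothetical name.

```python
import random

def sample_balanced_edges(edges_by_type, rng, per_type=None):
    """Undersample each edge type to the same number of edges.

    edges_by_type: {edge_type: [(src, dst), ...]}
    rng:           a random.Random instance (reseed per epoch to vary samples)
    per_type:      optional cap on the number of edges kept per type
    """
    non_empty = {t: e for t, e in edges_by_type.items() if e}
    if not non_empty:
        return {}
    target = min(len(e) for e in non_empty.values())
    if per_type is not None:
        target = min(target, per_type)
    return {t: rng.sample(e, target) for t, e in non_empty.items()}

# Toy co-listening graph dominated by podcast-podcast edges.
edges = {
    "podcast-podcast": [("p%d" % i, "p%d" % (i + 1)) for i in range(100)],
    "audiobook-podcast": [("a%d" % i, "p%d" % i) for i in range(10)],
    "audiobook-audiobook": [("a0", "a1"), ("a1", "a2")],
}
epoch_sample = sample_balanced_edges(edges, random.Random(0))
```

Drawing a fresh sample each epoch with a different seed lets the majority edge types be covered over time even though each individual epoch sees only a small, balanced subset.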

Two Tower

The 2T-HGNN model uses a Two Tower structure: two deep neural networks, one producing user representations and the other audiobook representations. The user tower takes demographic information and historical interactions with music, audiobooks, and podcasts as input; audiobook and podcast interactions are represented by averaging the HGNN embeddings of recently interacted items. It also incorporates streams and “weak signals” like follows and previews. The audiobook tower processes metadata such as language and genre, along with embeddings from titles and descriptions, and the specific HGNN embedding for each audiobook.
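To make the user tower's input concrete, here is a hedged sketch of how such a feature vector might be assembled. The function names, the feature layout, and the use of simple counts for weak signals are all assumptions for illustration; music interaction features are omitted for brevity.

```python
def average_embeddings(embeddings, dim):
    """Element-wise mean of a list of vectors; zeros if the list is empty."""
    if not embeddings:
        return [0.0] * dim
    return [sum(e[i] for e in embeddings) / len(embeddings) for i in range(dim)]

def build_user_input(demographics, recent_audiobooks, recent_podcasts,
                     weak_signals, hgnn_embeddings, dim):
    """Concatenate user features into one flat vector (hypothetical layout)."""
    book_avg = average_embeddings(
        [hgnn_embeddings[b] for b in recent_audiobooks if b in hgnn_embeddings], dim)
    pod_avg = average_embeddings(
        [hgnn_embeddings[p] for p in recent_podcasts if p in hgnn_embeddings], dim)
    return list(demographics) + book_avg + pod_avg + list(weak_signals)

hgnn_embeddings = {"book_a": [1.0, 0.0], "book_b": [0.0, 1.0], "pod_x": [0.5, 0.5]}
user_input = build_user_input(
    demographics=[0.3],
    recent_audiobooks=["book_a", "book_b"],
    recent_podcasts=["pod_x"],
    weak_signals=[2.0, 1.0],  # assumed encoding: follow count, preview count
    hgnn_embeddings=hgnn_embeddings,
    dim=2,
)
```

Because the user is represented only through averaged item embeddings and side features, no user node is needed in the graph, which is what keeps the HGNN small.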

The model produces separate output vectors for users and audiobooks, optimizing a loss function that aligns user vectors closer to audiobooks they’ve engaged with while distancing them from unrelated audiobook vectors.
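The exact loss is not spelled out here, so the sketch below uses an in-batch softmax loss over dot-product scores, a common choice for two-tower retrieval models, purely as an illustration. Each user vector is paired with one engaged audiobook, and the other audiobooks in the batch serve as the unrelated examples.

```python
import math

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def in_batch_softmax_loss(user_vecs, book_vecs):
    """Mean cross-entropy where book_vecs[i] is the positive for user_vecs[i].

    All other rows of book_vecs act as negatives for user i (an assumption;
    the paper may sample negatives differently).
    """
    total = 0.0
    for i, user in enumerate(user_vecs):
        logits = [dot(user, book) for book in book_vecs]
        peak = max(logits)  # subtract the max for numerical stability
        log_sum_exp = peak + math.log(sum(math.exp(x - peak) for x in logits))
        total += log_sum_exp - logits[i]
    return total / len(user_vecs)

users = [[1.0, 0.0], [0.0, 1.0]]
aligned_loss = in_batch_softmax_loss(users, [[1.0, 0.0], [0.0, 1.0]])
misaligned_loss = in_batch_softmax_loss(users, [[0.0, 1.0], [1.0, 0.0]])
```

The loss is lower when each user vector points toward its own audiobook, which is the behavior the training objective is meant to encourage.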

2T-HGNN Recommendations

The 2T-HGNN model generates user and audiobook vectors daily for personalized recommendations. Each day begins with training the HGNN model to update podcast and audiobook embeddings, which are then used to train the 2T model. After training, audiobook vectors are created, and a Nearest Neighbor index is built for real-time recommendations. For now, a brute-force search is used for the relatively small audiobook catalog, with plans to switch to an approximate k-NN index for efficiency as the catalog grows. User vectors are generated on-the-fly to ensure recommendations are up-to-date, especially for new users, with a latency target under 100 ms.
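For a small catalog, brute-force retrieval amounts to scoring every audiobook vector against the user vector and keeping the best k. The sketch below assumes dot-product similarity and a hypothetical in-memory dictionary of audiobook vectors.

```python
import heapq

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def brute_force_top_k(user_vec, book_vecs, k=10):
    """Return the ids of the k audiobooks with the highest dot-product score.

    book_vecs: {audiobook_id: [float, ...]}, precomputed by the audiobook tower.
    """
    scored = ((dot(user_vec, vec), book_id) for book_id, vec in book_vecs.items())
    return [book_id for _, book_id in heapq.nlargest(k, scored)]

catalog = {"a": [1.0, 0.0], "b": [0.6, 0.8], "c": [0.0, 1.0]}
top_two = brute_force_top_k([1.0, 0.0], catalog, k=2)
```

The cost grows linearly with catalog size, which is why an approximate k-NN index becomes attractive once the catalog is large.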

The HGNN can produce embeddings for new or unstreamed audiobooks using only their metadata, allowing for inductive inference.

The HGNN is implemented in PyTorch with a two-layer architecture and optimized with Adam. The 2T model, built in TensorFlow, includes three fully connected layers in each tower and uses demographic and interaction features for users, alongside metadata and LLM embeddings for audiobooks.

Training is done on a single machine with an Intel 16 vCPU and 128 GB memory.

Experiments

Ablations:

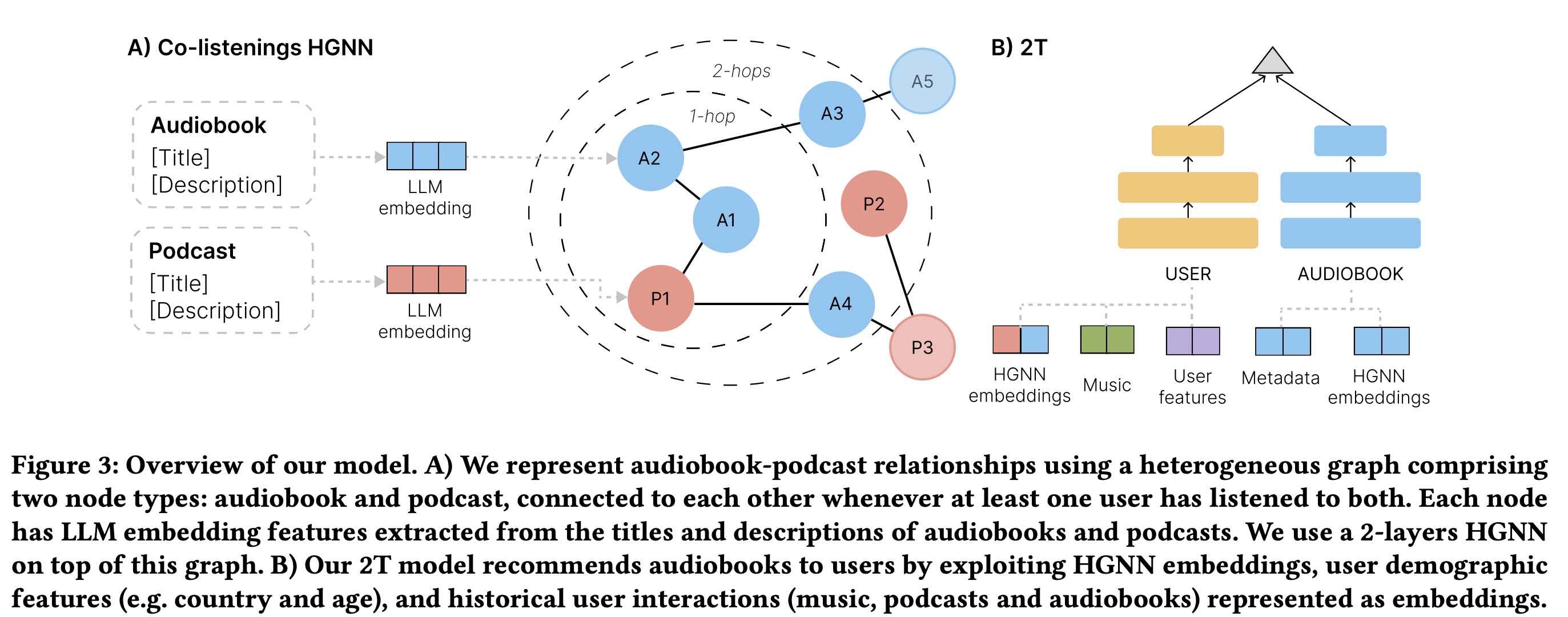

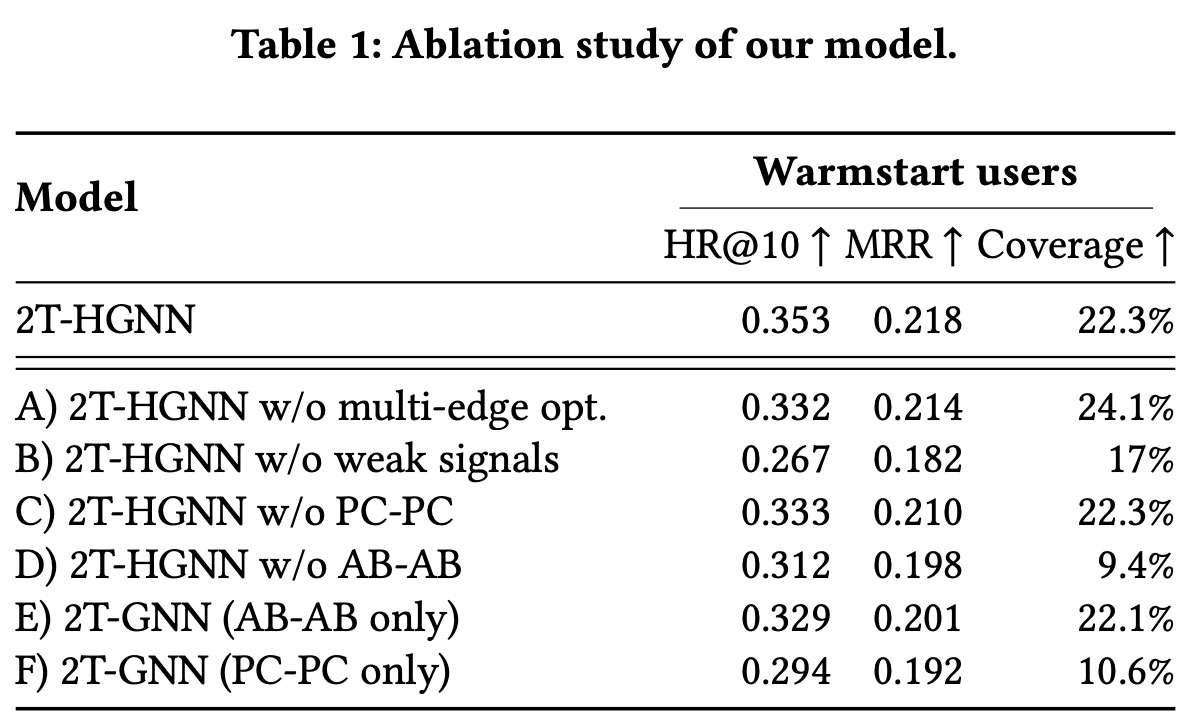

- Removing the balanced multi-link neighborhood sampler decreased Hit Rate at 10 (HR@10) by 6%: the model recommended a broader range of audiobooks, but those recommendations were less relevant to users;

- Excluding weak signals from training and inference resulted in a substantial drop in HR@10 and coverage, underscoring their importance for effective recommendations;

- Removing podcast-podcast edges decreased HR@10 by 6%, while omitting audiobook-audiobook edges resulted in an 11% HR@10 reduction and a 57% decrease in coverage;

- Using a homogeneous graph based solely on podcast-podcast connections sharply reduced HR@10, MRR, and coverage.
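For reference, the three metrics used in these ablations can be computed as sketched below. The definitions are the usual ones and are assumptions here: one held-out audiobook per user, and coverage measured as the fraction of the catalog that appears in at least one user's top-k list. The paper's exact evaluation protocol may differ.

```python
def hit_rate_at_k(recs, held_out, k=10):
    """Fraction of users whose held-out item appears in their top-k list."""
    hits = sum(1 for user, items in recs.items() if held_out[user] in items[:k])
    return hits / len(recs)

def mean_reciprocal_rank(recs, held_out):
    """Mean of 1/rank of the held-out item (0 when it is not recommended)."""
    total = 0.0
    for user, items in recs.items():
        if held_out[user] in items:
            total += 1.0 / (items.index(held_out[user]) + 1)
    return total / len(recs)

def coverage(recs, catalog_size, k=10):
    """Fraction of the catalog recommended to at least one user in the top k."""
    recommended = {item for items in recs.values() for item in items[:k]}
    return len(recommended) / catalog_size

recs = {"u1": ["a", "b", "c"], "u2": ["b", "c", "d"]}
held_out = {"u1": "b", "u2": "a"}
hr_at_2 = hit_rate_at_k(recs, held_out, k=2)
mrr = mean_reciprocal_rank(recs, held_out)
cov_at_2 = coverage(recs, catalog_size=5, k=2)
```

HR@k and MRR reward relevance, while coverage rewards breadth, so a change can raise one and lower the other, as the sampler ablation shows.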

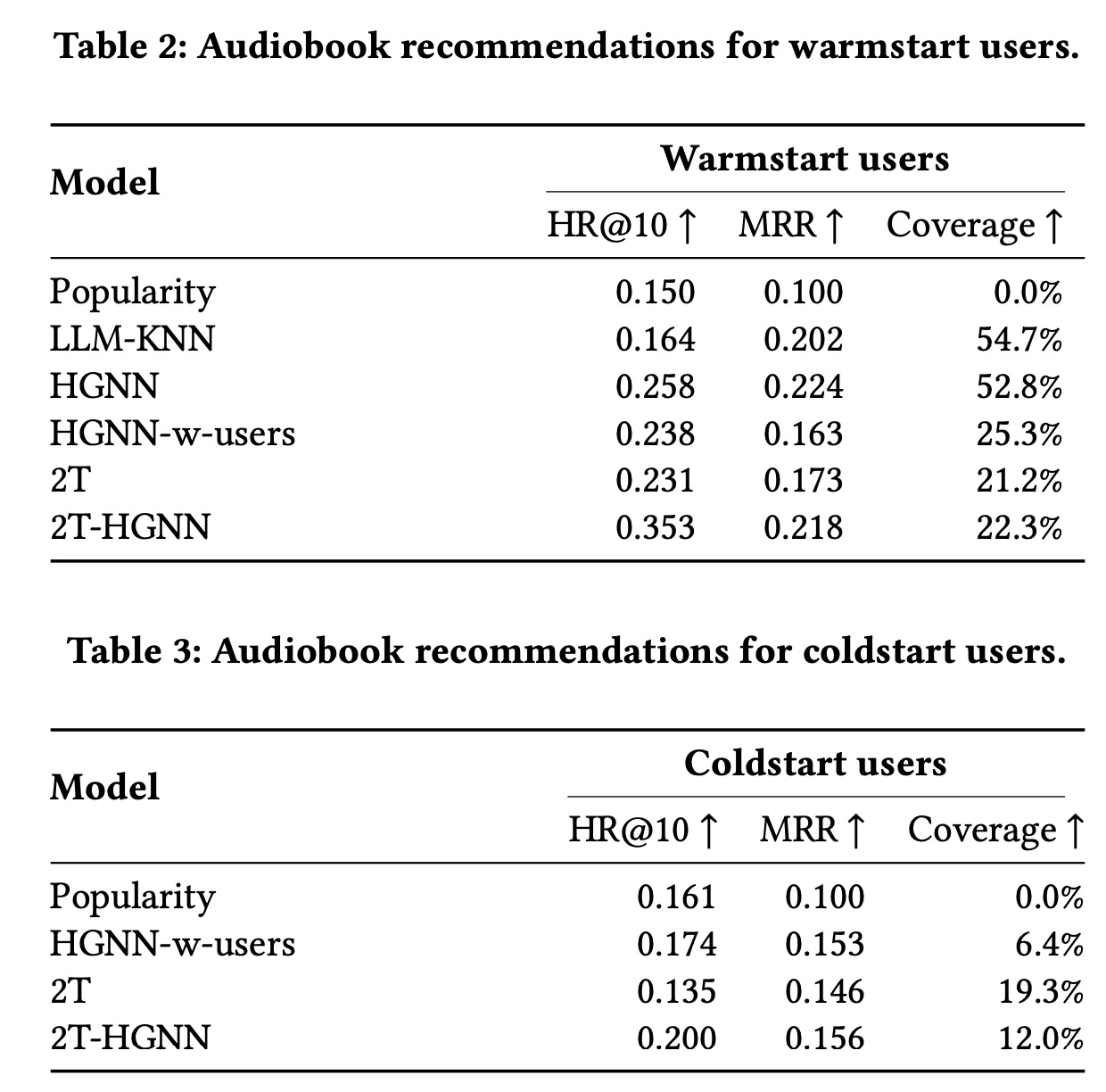

Audiobook recommendation:

- For warmstart users, the HGNN model achieves the best HR and MRR, outperforming the LLM-KNN method, which shows good coverage and MRR but falls short in personalized recommendation precision. The HGNN model’s ability to capture user preferences through co-listening data contributes significantly to its effectiveness. In contrast, the HGNN-w-users model suffers from user graph sparsity, leading to lower MRR and coverage.

- The 2T model, despite being less accurate across all metrics compared to HGNN models, offers the advantage of reduced training and inference times. The 2T-HGNN method becomes the most balanced approach, excelling in HR while offering a good compromise between the HGNN’s accuracy and the 2T model’s speed, particularly highlighting its advantage in long-tail content recommendation.

- For coldstart users, the popularity baseline demonstrates a strong bias. The 2T-HGNN and 2T+GNN methods show marked improvements in HR and MRR. While HGNN-w-users struggles with coverage, indicating a narrow recommendation range, the 2T-HGNN significantly improves coverage, albeit still limited compared to the 2T model alone.

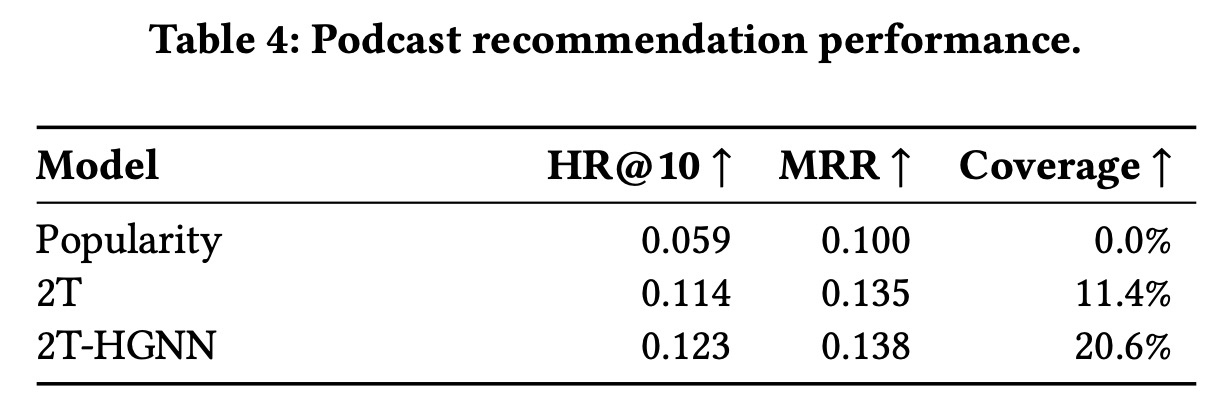

Podcast recommendation:

The integration of audiobooks and podcasts into a single graph for the 2T-HGNN model has significantly enhanced podcast recommendations on an existing online platform that previously only featured podcasts. This approach has not only improved HR@10 by 7% but also remarkably increased coverage by 80% for both warmstart and coldstart users.

Production A/B Experiment:

An A/B test involving 11.5 million monthly active Spotify users compared the online performance of the 2T-HGNN model against the current production model and a 2T model for personalizing audiobook recommendations. Users were divided into three groups, each receiving recommendations from one of the models. Results demonstrated that the 2T-HGNN model notably improved both the rate at which new audiobooks were started and the overall audiobook streaming rate compared to the other models. The 2T model, while competitive, produced a smaller increase in new audiobook start rates and didn’t significantly affect streaming rates.
